ALKIS TOUTZIARIDIS – FEBRUARY 13TH, 2024

EDITOR: ANNE WU

Introduction

Artificial intelligence, once a speculative concept, has quickly become a dominant force in the technological landscape. In just two years, AI-driven tools like ChatGPT and Google Bard have moved from experimental to essential, weaving their way into our work, homes, and even our social media feeds through an endless stream of memes.

Similarly, OpenAI’s DALL-E, an AI program capable of generating detailed images and art from textual descriptions, has sparked a global conversation about the creative possibilities — and potential pitfalls — of AI.

As these technologies continue to advance, the conversation inevitably turns to regulation. There has been a growing awareness that the pace of AI innovation must be matched with thoughtful governance to ensure it serves the public good while minimizing harm.

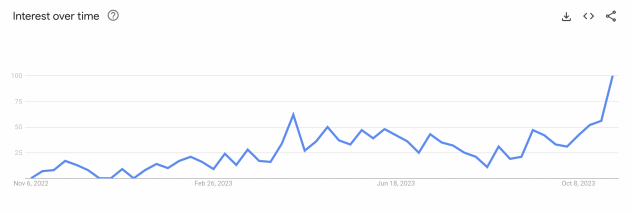

In this article, we’ll investigate the current state and future prospects of AI regulation. By looking at existing and proposed policies, we’ll shed light on how these rules are being shaped around the world and what this means for the future of AI development and its integration into society. The Google Trends graph for the term “AI regulation” shows a clear trajectory of growing public and political interest in the topic, a signal that the conversation is only just beginning (see: Figure 1). Overall, this article aims to provide a clear view of how the global community is grappling with AI’s rapid rise and how that rise can be steered to produce the best outcome for all.

Why AI should be regulated

Regulating AI is not merely an option but a necessity. In the absence of regulation, there is a real risk that AI could evolve in ways that are misaligned with human values and societal norms, leading to unintended consequences. The unparalleled efficiency and autonomy that AI systems can achieve make them both incredibly useful and potentially dangerous. For instance, in critical applications such as autonomous vehicles or diagnostic systems, failures could have dire consequences, underscoring the need for standards that ensure safety and reliability.

Politically, AI regulation becomes a balancing act between ensuring ethical use and avoiding the creation of a bureaucratic wall that could hinder a nation’s agility in technological advancement. Advanced AI regulation also raises issues of data sovereignty and privacy, shaping international relations and trade negotiations as different countries’ regulatory frameworks reflect divergent political ideologies and strategic priorities. Domestically, regulation affects labor markets and could become a politicized issue; the push for regulation may be viewed either as protecting jobs from automation or as impeding economic growth and efficiency.

Moreover, the potential for AI to be used in developing autonomous weapons adds urgency to the debate. These weapons could operate without human oversight, making life-and-death decisions on the battlefield and posing significant ethical and security concerns. Preventing uses that could be detrimental to humanity is, on its own, a compelling case for the regulation of AI.

However, it is also crucial to consider that over-regulation may stifle innovation and the development of beneficial AI technologies. Regulation should not be about hindering AI, but rather about guiding its development in a direction that maximizes benefits while minimizing risks. Therefore, a balanced approach is needed: one that imposes necessary safeguards without constraining the creative and beneficial aspects of AI research and development.

Thus, the question is not “Should we regulate it?” but rather “Who should regulate it, and how?”

The global regulatory landscape

The European Union’s AI Act presents a forward-thinking framework aimed at safeguarding fundamental rights while nurturing innovation. It especially scrutinizes “high-risk” AI applications, demanding rigorous compliance to ensure their safety and ethical deployment. Simultaneously, the Council of Europe’s AI Treaty is pioneering international norms, striving to embed AI within the boundaries of human rights and democratic values. While the treaty sets a commendable standard, the diversity in ratification and enforcement poses a challenge for its universal adoption and effectiveness.

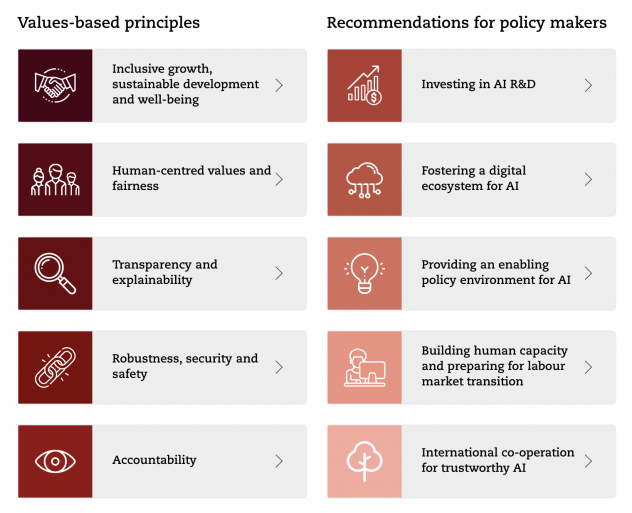

On a global scale, the Organisation for Economic Co-operation and Development (OECD) AI Principles lay down the ethical groundwork for AI, championing principles of transparency and accountability, although they rely on member nations to integrate these into their respective legal frameworks (see: Figure 2). Complementing these efforts, the Global Partnership on AI (GPAI) acts as a multilateral forum, uniting member countries in the pursuit of AI that upholds human-centric values and fosters international collaboration in AI policy and research.

Industry perspectives on AI regulation: the IEEE 1012 standard

The Institute of Electrical and Electronics Engineers (IEEE) 1012 standard, also known as the IEEE Standard for System, Software, and Hardware Verification and Validation, is a well-established framework that outlines the steps and processes necessary for the verification and validation (V&V) of systems, including software and hardware components. Its approach to risk assessment involves identifying potential issues that could affect system performance or cause system failure, evaluating the severity and likelihood of these risks, and implementing measures to mitigate them.

Historically, IEEE 1012 has been applied across various sectors that involve complex systems and software, including aerospace, defense, and medical devices. It has been particularly valuable in environments where safety and reliability are paramount, as it provides a structured methodology for ensuring systems operate within their defined specifications and are free from critical errors.

In terms of AI regulation, IEEE 1012 can serve as a roadmap for addressing the multifaceted risks associated with emerging AI technologies. Its rigorous verification and validation approach can be adapted to AI systems, where the identification of risks must include the potential for biased decision-making, the unpredictability of machine learning models, and other AI-specific concerns. By following the IEEE 1012 standard, regulators and industry practitioners can systematically assess and control the risks inherent in AI applications, paving the way for safer and more reliable technology deployment.

A multi-tiered approach to AI risk management

Determining risk levels

In line with the IEEE 1012 standard, risks within AI systems are categorized based on their impact and probability. This risk-based categorization enables a tailored application of V&V processes. For low-risk AI applications, the V&V process might be less stringent, focusing on basic functionality and performance. In contrast, high-risk applications, such as those impacting human health or safety, would be subject to a more thorough V&V process, possibly including extensive testing, simulations, independent reviews, and real-world monitoring.

The standard advocates for a multi-tiered approach to managing these risks. It involves not just initial V&V but also ongoing monitoring and reevaluation as the system evolves. Given the dynamic nature of AI learning and decision-making, this continual reassessment is crucial for maintaining integrity and trustworthiness.
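The risk-based categorization described above can be illustrated with a short sketch. This is only a toy model under stated assumptions: the `Impact` and `Likelihood` scales, the score thresholds in `risk_tier`, and the grouping of activities in `VV_ACTIVITIES` are all hypothetical and are not taken from the text of IEEE 1012. The activities themselves are the examples named in this section.

```python
from enum import IntEnum


class Impact(IntEnum):
    """Hypothetical impact scale: how bad a failure would be."""
    MINOR = 1
    MODERATE = 2
    SEVERE = 3  # e.g. harm to human health or safety


class Likelihood(IntEnum):
    """Hypothetical likelihood scale: how probable a failure is."""
    RARE = 1
    POSSIBLE = 2
    LIKELY = 3


# Illustrative V&V activities per tier, using the examples from the text.
# The grouping is an assumption, not a requirement of IEEE 1012.
VV_ACTIVITIES = {
    "low": ["basic functionality and performance checks"],
    "medium": [
        "basic functionality and performance checks",
        "extensive testing",
        "simulations",
    ],
    "high": [
        "basic functionality and performance checks",
        "extensive testing",
        "simulations",
        "independent reviews",
        "real-world monitoring",
    ],
}


def risk_tier(impact: Impact, likelihood: Likelihood) -> str:
    """Map impact and likelihood to a tier using assumed thresholds."""
    score = impact * likelihood  # ranges from 1 to 9
    # Severe-impact systems are always treated as high risk, however rare.
    if impact == Impact.SEVERE or score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


def required_vv(impact: Impact, likelihood: Likelihood) -> list[str]:
    """Return the V&V activities assigned to the system's risk tier."""
    return VV_ACTIVITIES[risk_tier(impact, likelihood)]


if __name__ == "__main__":
    # A diagnostic tool: failures are rare but could harm patients.
    print(risk_tier(Impact.SEVERE, Likelihood.RARE))
    # An image filter: failures are possible but minor.
    print(risk_tier(Impact.MINOR, Likelihood.POSSIBLE))
```

The one deliberate design choice here is that a severe impact forces the highest tier regardless of likelihood, which mirrors the idea that applications affecting health or safety warrant the most thorough V&V. A real scheme would need thresholds set by the relevant regulator or standard.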

Policy implications

Policymakers can leverage the IEEE 1012 standard as a template for crafting targeted regulations for AI applications. By employing its risk-based approach, regulations can be designed to impose more stringent oversight and mandatory V&V processes on high-risk AI technologies, such as autonomous vehicles and medical diagnostic tools, while allowing for a more flexible approach for lower-risk categories.

This nuanced approach ensures that the burdens of compliance are proportionate to the level of risk involved. Moreover, policymakers can recommend that AI developers and users adopt IEEE 1012 V&V processes as part of their internal risk management practices, ensuring a baseline quality and safety standard across the industry.

Additionally, policymakers can encourage the evolution of the IEEE 1012 standard itself, to integrate more AI-specific considerations and stay abreast of the rapidly evolving landscape of AI technologies. By doing so, they can help create a regulatory environment that not only protects consumers and the public but also fosters innovation and growth within the AI sector.

Strategies for effective AI governance

International cooperation is crucial in developing AI regulations due to the inherently global nature of technology. Aligning with standards through multilateral organizations, such as the OECD, facilitates the establishment of universally accepted norms and practices. This coordination ensures that AI operates within a framework that is consistent and predictable across borders, enhancing global compliance and interoperability.

Regulatory frameworks must be designed to be flexible, yet sufficiently robust, to maintain oversight. Regulations should be adaptable to accommodate the pace of AI innovation while establishing mechanisms for preemptive risk management. A balance must be struck where the regulatory environment can evolve in tandem with technological advancements, providing a stable foundation that supports both development and safety.

Lifecycle oversight

A comprehensive regulatory approach should also encompass the entire lifecycle of AI systems. This includes rigorous evaluation from the development phase through deployment and retirement. By instituting a continuous oversight process, regulators can identify and mitigate risks at each stage, ensuring ongoing compliance and addressing potential issues proactively.

Independent review mechanisms are integral to the governance of AI, ensuring that AI systems are reliable, safe, and in line with established regulations. Third-party audits and assessments provide an objective evaluation of AI technologies, reinforcing accountability and public trust. The scrutiny from independent entities is vital for the verification of compliance and the detection of deviations from required standards.

Case studies and examples

The European Union’s General Data Protection Regulation (GDPR) has set a precedent for AI regulation by addressing automated decision-making and profiling, offering a level of transparency and control to consumers. The GDPR’s data protection impact assessment requirement has encouraged companies to proactively manage AI risks.

In contrast, the United States has faced difficulties in establishing a cohesive national framework due to its sectoral approach to privacy and data protection, leading to a complex landscape of state-level regulations such as the California Consumer Privacy Act (CCPA).

The Biden-Harris Administration’s October 2023 executive order on safe, secure, and trustworthy AI represents a significant step towards the creation of a unified framework for AI regulation in the U.S. This initiative can serve as a catalyst for converging various international standards, drawing from successful models like the GDPR and sector-specific policies to establish a comprehensive and harmonized set of regulations.

To ensure regulations enhance innovation, policymakers should incorporate flexible mechanisms like regulatory sandboxes, where new AI technologies can be tested under regulatory supervision. Additionally, structures that incentivize companies to adopt ethical AI practices, along with AI impact assessments similar to those required under the GDPR, can support innovation while ensuring that risks are managed.

Conclusion

Throughout this article, we have navigated the intricate landscape of AI regulation, recognizing its significance in safeguarding public welfare while fostering innovation. We discussed the IEEE 1012 standard’s approach to risk assessment and its potential to inform emerging AI regulations, providing a structured methodology for managing risks associated with AI technologies.

We explored a multi-tiered strategy for AI risk management and emphasized the importance of international collaboration in AI governance, as well as the balance between regulatory flexibility and control. We also underscored the need for oversight across the AI system lifecycle and the critical role of independent review in validating that AI systems meet safety and reliability standards.

As we look forward, it is imperative to continue to strive for a harmonious balance between innovation and regulation. The vision for the future is one where AI is not only a catalyst for technological and societal advancement, but also a domain of technology that is managed with foresight and responsibility. AI regulation should be seen not as a hurdle, but as a framework that can guide AI to be developed and used in a manner that is beneficial for all of society, fostering trust and confidence in the technologies that are becoming increasingly integral to our everyday lives. After all, the final question becomes: how can we create a future where AI not only advances our capabilities but also resonates deeply with our human essence, balancing innovation with the wisdom of our collective experience?

Featured Image Source: DALL-E

Disclaimer: The views published in this journal are those of the individual authors or speakers and do not necessarily reflect the position or policy of Berkeley Economic Review staff, the Undergraduate Economics Association, the UC Berkeley Economics Department and faculty, or the University of California, Berkeley in general.